Knock Knock

Machine Learning / UIUX / Agriculture

Do you remember when your grandma knocked on watermelons to see if they were ripe? The subtle skill of picking out fresh produce in a busy marketplace is a dying art. Knock Knock is a mobile application that uses machine learning algorithms to rediscover this skill for the next generation.

Concept

Do you remember when your grandma knocked on watermelons to see if they were ripe? The subtle skill of picking out fresh produce in a busy marketplace is a dying art. Exploring sound diagnostics through everyday experience, Knock Knock is a mobile application that uses machine learning algorithms to rediscover this skill for the next generation.

App Flow

Based on a Knock Knock joke, a simple app flow was developed to ease the user journey. The app includes four main components: image detection, sound detection, ripeness evaluation, and feedback.

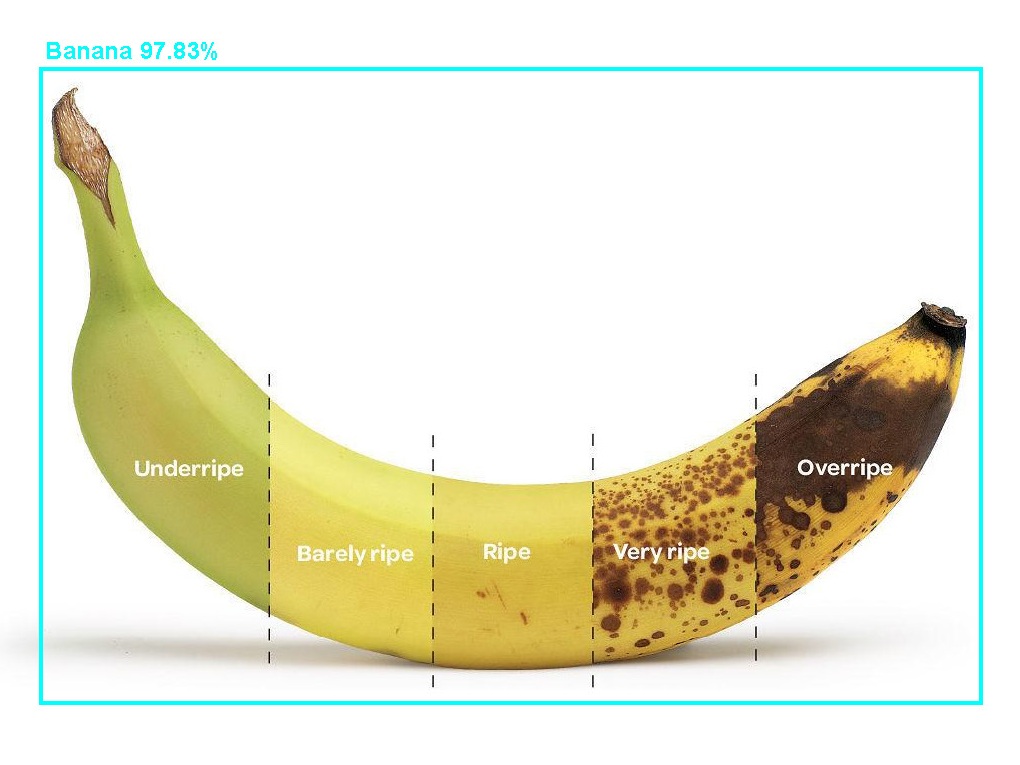

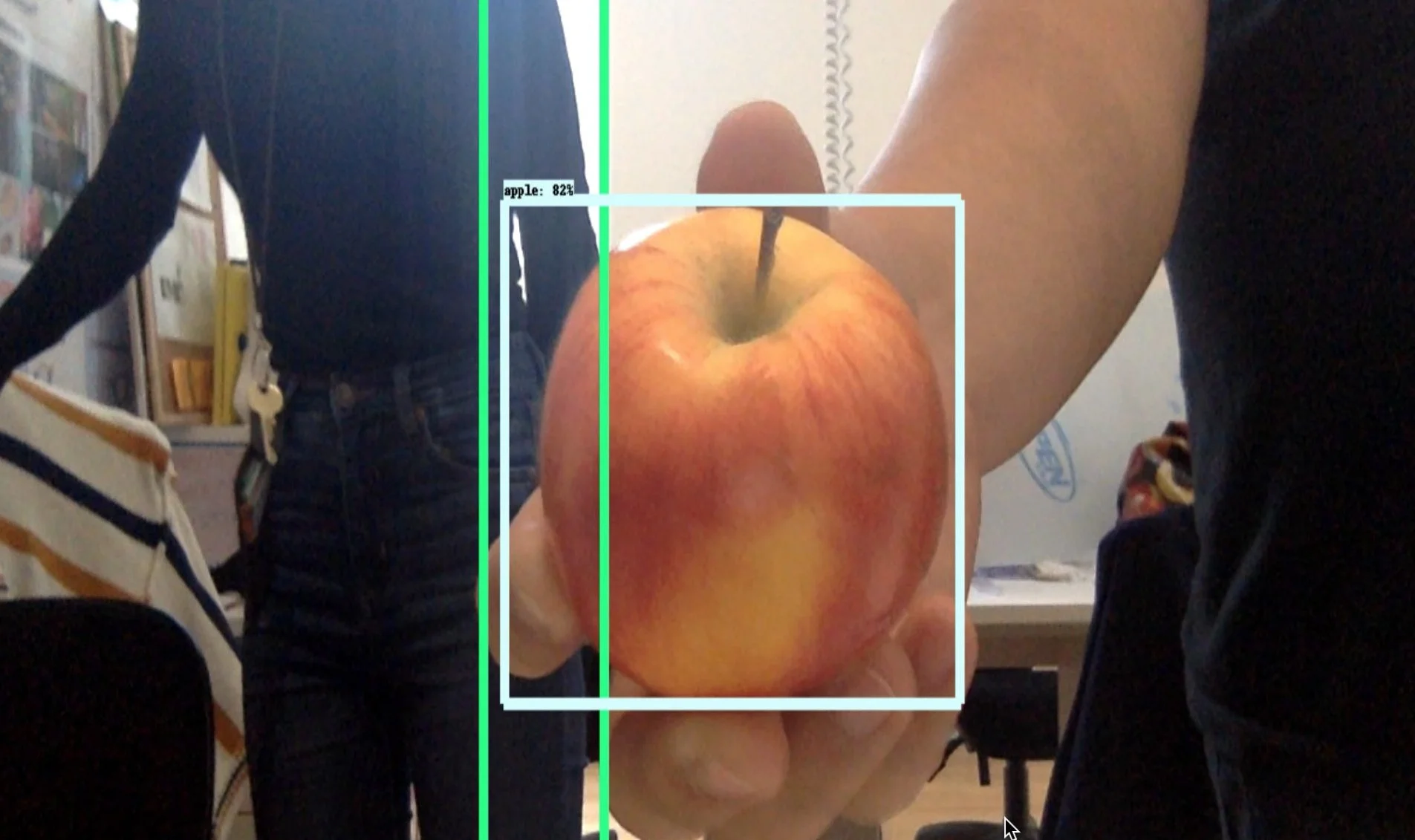

Image Data

Fruit types are classified by color, shape, and size. Ripeness is not binary but a scale. Because the link between how a fruit looks and how ripe it is is intuitive and visual, we planned to create an initial data set of labeled images and then use it to train the sound-based machine learning algorithm. We validated the idea by training a convolutional neural network model for real-time object detection using TensorFlow.
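One way to picture "ripeness as a scale" is to turn a classifier's per-level outputs into a single number between 0 and 1. The sketch below is only an illustration under stated assumptions: the four ripeness levels, the `ripeness_score` helper, and the use of a softmax-weighted average are all hypothetical and are not taken from the project's actual model.

```python
import math

# Hypothetical ordered ripeness levels (an assumption for illustration only).
RIPENESS_LEVELS = ["unripe", "nearly ripe", "ripe", "overripe"]


def softmax(logits):
    """Convert raw model outputs into probabilities that sum to 1."""
    highest = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(value - highest) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def ripeness_score(logits):
    """Map one output per ripeness level to a score in [0, 1].

    0.0 corresponds to the first level and 1.0 to the last level.
    The score is the probability-weighted average of the level index.
    """
    probabilities = softmax(logits)
    expected_index = sum(i * p for i, p in enumerate(probabilities))
    return expected_index / (len(probabilities) - 1)


if __name__ == "__main__":
    # Made-up outputs that lean toward the "ripe" level.
    print(round(ripeness_score([0.1, 1.0, 3.0, 0.5]), 2))
```

A continuous score like this could drive a slider or gauge in the feedback step instead of a yes/no answer, though how the app actually presents ripeness is not specified here.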

Sound Data

To validate the feasibility of detecting the ripeness of watermelons from knocking sounds, we gathered a library of audio files recorded by knocking on objects. We applied the Fast Fourier Transform (FFT) to convert each recorded knock from the time domain to the frequency domain. The resulting spectra show a promising pattern that could be used for further classification.
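The FFT step can be sketched in a few lines with NumPy. This is a minimal illustration, not the project's pipeline: the `synthetic_knock` generator stands in for a real recording, its frequencies are arbitrary values chosen for the demo (not measured watermelon data), and the Hann window and `dominant_frequency` helper are assumptions added here.

```python
import numpy as np


def synthetic_knock(freq_hz, sample_rate=8000, duration=0.25, decay=30.0):
    """Generate a decaying sine wave as a stand-in for a recorded knock.

    All parameters are illustrative; a real app would load audio samples.
    """
    t = np.arange(int(sample_rate * duration)) / sample_rate
    return np.exp(-decay * t) * np.sin(2 * np.pi * freq_hz * t)


def dominant_frequency(samples, sample_rate):
    """Return (peak frequency in Hz, frequency bins, magnitude spectrum)."""
    samples = np.asarray(samples, dtype=float)
    samples = samples - samples.mean()              # remove any DC offset
    windowed = samples * np.hanning(len(samples))   # reduce spectral leakage
    magnitudes = np.abs(np.fft.rfft(windowed))      # time -> frequency domain
    freqs = np.fft.rfftfreq(len(samples), d=1.0 / sample_rate)
    peak = freqs[np.argmax(magnitudes)]
    return peak, freqs, magnitudes


if __name__ == "__main__":
    sample_rate = 8000
    for label, freq in [("knock A", 180.0), ("knock B", 420.0)]:
        clip = synthetic_knock(freq, sample_rate=sample_rate)
        peak, _, _ = dominant_frequency(clip, sample_rate)
        print(f"{label}: dominant frequency near {peak:.0f} Hz")
```

Features such as the dominant frequency, or the full magnitude spectrum, are the kind of input a later classification step could work from.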